I think in the future there will be a single API for all components. As a result, you often have to work with several of these interfaces in parallel that is a certain disadvantage. Each of these APIs was better than the previous ones. They all appeared not immediately, but consistently. For example, there are three APIs for working with data now.

Because while this book was being written, many changes in Spark happened. If you buy a book about Spark, you risk getting outdated knowledge. That was relevant a year or two ago, now it has been replaced by more optimal components.

Big data analysis with apache spark code#

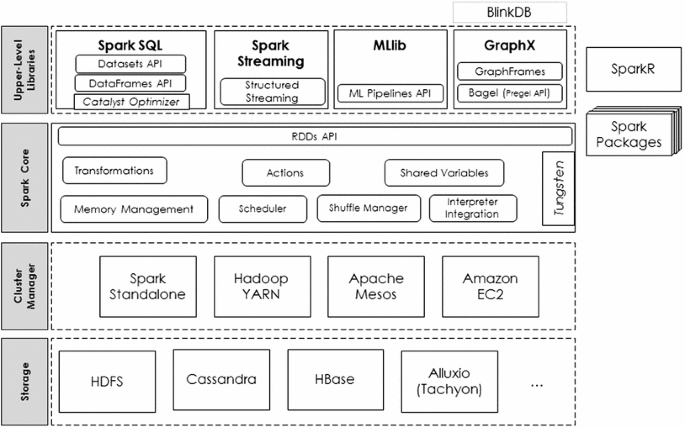

Most of the code base and function signatures require reading the Scala code with a dictionary.Īnd last but not at least, even a small home project is expensive if you test it in the cluster. Things that work on a small dataset (test methods and some JVM settings) often work differently on big data in production.Īnother possible challenge for a Java or Python developer is that you need to learn Scala. In fact, challenges are typical for any big data framework. What challenges do you usually face while working with Spark? Possible challenges Data processing from devices and sensors.Expansion and retention of the audience.Here is an indicative (but not exhaustive) selection of some practical situations where a high-speed, diverse and volumetric processing of big data is required for which Spark is so well suited: Online Marketing Potentially, the coverage of Spark is very extensive. For example, transmit, filtering and aggregating a flow from Kafka, adding it to MySQL, etc. Spark can be used as a quick filter to reduce the dimension of the input data. There is a huge amount of Spark connectors. It copes with the problem of “everything with everything” integration.By the way, data scientists may use Spark features through R- and Python-connectors. It solves the problem of machine learning and distributed data integration.Spark helps to create reports quickly, perform aggregations of a large amount of both static data and streams.What can you do with Spark? Apache Spark Application planning, distribution, and tracking of tasks in a clusterĬluster Managers are used for the management of the Spark work in a cluster of servers.memory management and recovery after failures.Spark Core is a basic engine for a large-scale parallel and distributed data processing. The library provides a universal tool for ETL, research analysis and graph-based iterative computing. GraphX is a library for manipulating graphs and performing parallel operations with them. 
MLlib is a machine learning library that provides various algorithms designed for horizontal scaling on a cluster for classification, regression, clustering, co-filtering, etc. Such data can be log files of the working web server (for example, processed by Apache Flume or placed on HDFS / S3), information from social networks (for example, Twitter), as well as various message queues such as Kafka. Spark Streaming supports real-time streaming processing. There is even a possibility to join data across the mentioned sources. Both help to access a variety of data sources, including Hive, Avro, Parquet, ORC, JSON, and JDBC in the usual way. SQL & DataFrames is a Spark component that supports data querying using either SQL or DataFrame API. Spark main components Apache Spark components It is well integrated with the Hadoop ecosystem and data sources.

Natively Spark supports Scala, Python, and Java. Furthermore, the code is written faster because here in Spark we have more high-level operators at our disposal. Comparing to Hadoop MapReduce, another data processing platform, Spark accelerates the programs operating in memory by more than 100 times, and on drive – by more than 10 times.

Big data analysis with apache spark free#

The project is being developed by the free community, currently, it is the most active of the Apache projects. What is Apache Spark?Īpache Spark is a unified analytics engine for large-scale data processing. According to the Spark FAQ, the largest of these clusters have more than 8,000 nodes. Many organizations operate Spark in clusters, involving thousands of nodes. Nowadays Spark is used in lots of leading companies such as Amazon, eBay, Nasa, etc. Today I’ll focus your attention on Spark. All this fascinated me so much that I devoted all the subsequent time to studying these and several related technologies. At the same time, I got acquainted with the Scala programming language in which Spark was written. It’s a DMP platform with unique long-lasting cross-device technology. I first heard about Spark just over 2 years ago when I started working on the project related to consented tracking of consumer data, building audiences, creating target ad campaigns and aggregating detailed analytics. Have you heard of Apache Spark? It is a leading framework for processing big data.
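To make the "quick filter" use case mentioned earlier a bit more tangible, here is a minimal sketch of the filter-then-aggregate pattern. It is written in plain Python rather than Spark itself, so it runs without a cluster. The sensor names, the readings, the `-999.0` sentinel value, and the helper functions are all made up for illustration. The comments point to the RDD operators (`filter`, `mapValues`, `reduceByKey`) that play roughly the same roles in Spark.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Event = Tuple[str, float]  # (device_id, temperature)

# Hypothetical sample events. In a real job they might arrive from a
# source such as Kafka; here they are hard-coded for illustration.
EVENTS: List[Event] = [
    ("sensor-a", 21.5),
    ("sensor-b", -999.0),  # assumed sentinel for a failed reading
    ("sensor-a", 22.5),
    ("sensor-b", 19.0),
    ("sensor-c", 30.0),
]


def is_valid(event: Event) -> bool:
    """Keep only readings that look like real temperatures."""
    _, temperature = event
    return temperature > -100.0


def average_by_device(events: Iterable[Event]) -> Dict[str, float]:
    """Filter out bad readings, then average the rest per device."""
    # Step 1: filter. With a Spark RDD this would be rdd.filter(is_valid).
    valid = (event for event in events if is_valid(event))

    # Step 2: aggregate per key. In Spark this is roughly mapValues to
    # (value, 1) pairs followed by reduceByKey summing sums and counts.
    totals = defaultdict(lambda: [0.0, 0])
    for device, temperature in valid:
        totals[device][0] += temperature
        totals[device][1] += 1

    # Step 3: turn (sum, count) into an average for each device.
    return {device: s / n for device, (s, n) in totals.items()}


averages = average_by_device(EVENTS)
print(averages)
```

The point of the sketch is only the shape of the pipeline: throw away what you do not need as early as possible, then aggregate what is left. Spark applies the same idea, but distributes each step across the nodes of a cluster.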